Running the Cloud Pak Deployer on AWS (ROSA)

On Amazon Web Services (AWS), OpenShift can be set up in various ways: managed by Red Hat (ROSA) or self-managed. The steps below apply to the ROSA (Red Hat OpenShift Service on AWS) installation. More information about ROSA can be found here: https://aws.amazon.com/rosa/

There are 5 main steps to run the deployer for AWS:

- Configure deployer

- Prepare the cloud environment

- Obtain entitlement keys and secrets

- Set environment variables and secrets

- Run the deployer

See the deployer in action in this video:

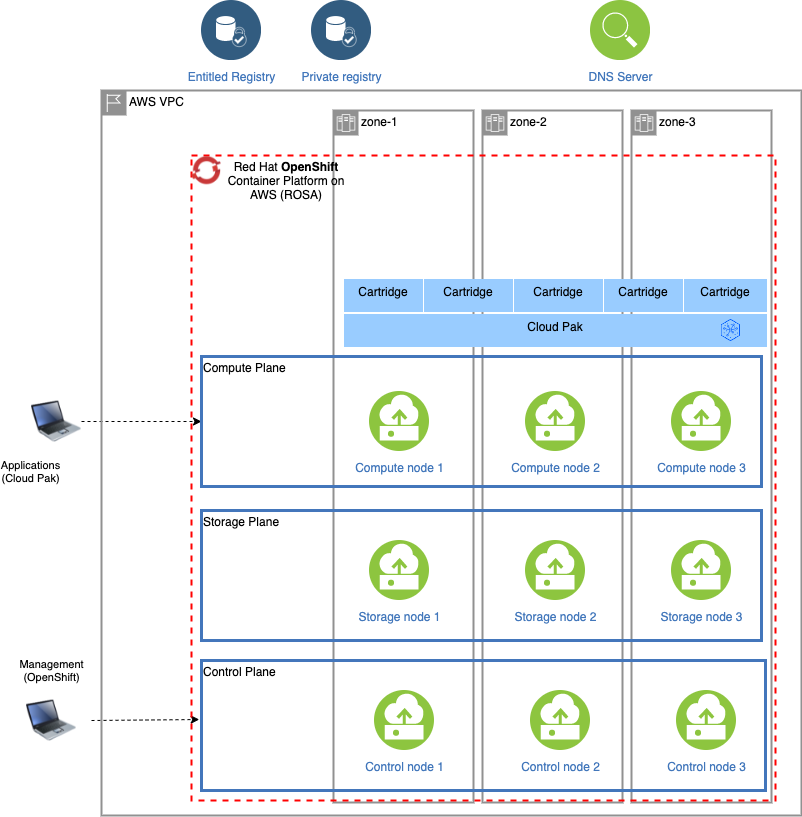

Topology

A typical setup of the ROSA cluster is pictured below:

When deploying ROSA, an external host name and domain name are automatically generated by Amazon Web Services and both the API and Ingress servers can be resolved by external clients. At this stage, one cannot configure the domain name to be used.

1. Configure deployer

Deployer configuration and status directories

The deployer reads the configuration from a directory you set in the CONFIG_DIR environment variable. A status directory (STATUS_DIR environment variable) is used to log activities and to store temporary files and scripts. If you use a File Vault (default), the secrets are kept in the $STATUS_DIR/vault directory.

You can find OpenShift and Cloud Pak sample configuration (yaml) files here: sample configuration. For ROSA installations, copy one of the ocp-aws-rosa-*.yaml files into the $CONFIG_DIR/config directory. If you also want to install a Cloud Pak, copy one of the cp4*.yaml files.

Example:

mkdir -p $HOME/cpd-config/config

cp sample-configurations/sample-dynamic/config-samples/ocp-aws-rosa-elastic.yaml $HOME/cpd-config/config/

cp sample-configurations/sample-dynamic/config-samples/cp4d-471.yaml $HOME/cpd-config/config/

Set configuration and status directories environment variables

Cloud Pak Deployer uses the status directory to log its activities and also to keep track of its running state. For a given environment you're provisioning or destroying, you should always specify the same status directory to avoid contention between different deploy runs.

export CONFIG_DIR=$HOME/cpd-config

export STATUS_DIR=$HOME/cpd-status

- CONFIG_DIR: Directory that holds the configuration. It must have a config subdirectory that contains the configuration yaml files.

- STATUS_DIR: The directory where the Cloud Pak Deployer keeps all status information and log files.
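The layout described above (a config subdirectory holding yaml files) can be checked before starting a run. The following is a minimal sketch; check_config_dir is a hypothetical helper and not part of cp-deploy.sh, and it only looks for files ending in .yaml.

```shell
# Sketch: verify that a directory has a "config" subdirectory
# holding at least one .yaml file.
# check_config_dir is a hypothetical helper, not part of cp-deploy.sh.
check_config_dir() {
    dir=$1
    if [ ! -d "$dir/config" ]; then
        echo "missing subdirectory: $dir/config" >&2
        return 1
    fi
    # Succeed as soon as one .yaml file is found.
    for f in "$dir"/config/*.yaml; do
        if [ -f "$f" ]; then
            return 0
        fi
    done
    echo "no .yaml files in $dir/config" >&2
    return 1
}

# Demonstration against a throwaway directory.
demo_dir=$(mktemp -d)
mkdir -p "$demo_dir/config"
touch "$demo_dir/config/ocp-aws-rosa-elastic.yaml"
check_config_dir "$demo_dir" && echo "layout ok"
rm -rf "$demo_dir"
```

In practice you would call it as check_config_dir "$CONFIG_DIR" after exporting the variable.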

Optional: advanced configuration

If the deployer configuration is kept on GitHub, follow the instructions in GitHub configuration.

For special configuration with defaults and dynamic variables, refer to Advanced configuration.

2. Prepare the cloud environment

Enable ROSA on AWS

Before you can use ROSA on AWS, you have to enable it if this has not been done already. This can be done as follows:

- Go to https://aws.amazon.com/

- Log in to the AWS console

- Search for ROSA service

- Click Enable OpenShift

Obtain the AWS IAM credentials

You will need an Access Key ID and Secret Access Key for the deployer to run rosa commands.

- Go to https://aws.amazon.com/

- Log in to the AWS console

- Click on your user name at the top right of the screen

- Select Security credentials. You can also reach this screen via https://console.aws.amazon.com/iam/home?region=us-east-2#/security_credentials.

- If you do not yet have an access key (or you no longer have the associated secret), create an access key

- Store your Access Key ID and Secret Access Key in a safe place

Alternative: Using temporary AWS security credentials (STS)

If your account uses temporary security credentials for AWS resources, you must use the Access Key ID, Secret Access Key and Session Token associated with your temporary credentials.

For more information about using temporary security credentials, see https://docs.aws.amazon.com/IAM/latest/UserGuide/id_credentials_temp_use-resources.html.

The temporary credentials must be issued for an IAM role that has sufficient permissions to provision the infrastructure and all other components. More information about required permissions for ROSA cluster can be found here: https://docs.openshift.com/rosa/rosa_planning/rosa-sts-aws-prereqs.html#rosa-sts-aws-prereqs.

An example on how to retrieve the temporary credentials for a user-defined role:

printf "\nexport AWS_ACCESS_KEY_ID=%s\nexport AWS_SECRET_ACCESS_KEY=%s\nexport AWS_SESSION_TOKEN=%s\n" $(aws sts assume-role \

--role-arn arn:aws:iam::678256850452:role/ocp-sts-role \

--role-session-name OCPInstall \

--query "Credentials.[AccessKeyId,SecretAccessKey,SessionToken]" \

--output text)

This would return something like the below, which you can then paste into the session running the deployer.

export AWS_ACCESS_KEY_ID=ASIxxxxxxAW

export AWS_SECRET_ACCESS_KEY=jtLxxxxxxxxxxxxxxxGQ

export AWS_SESSION_TOKEN=IQxxxxxxxxxxxxxbfQ

If you need to use temporary security credentials, you must set infrastructure.use_sts to True in the openshift configuration. Cloud Pak Deployer will then run the rosa create cluster command with the appropriate flag.
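Because a forgotten use_sts setting only surfaces once the cluster is being created, it can help to check the configuration files up front. The sketch below is illustrative only: config_uses_sts is a hypothetical helper, and it assumes the key appears on its own line as "use_sts: True" in a yaml file under the config subdirectory. The yaml fragment written by the demonstration is a reduced stand-in, not a complete openshift definition.

```shell
# Sketch: succeed if any yaml file under <dir>/config sets use_sts to True.
# config_uses_sts is a hypothetical helper; the line format is an assumption.
config_uses_sts() {
    grep -Eqs '^[[:space:]]*use_sts:[[:space:]]*[Tt]rue[[:space:]]*$' "$1"/config/*.yaml
}

# Demonstration with a reduced, illustrative configuration fragment.
demo_dir=$(mktemp -d)
mkdir -p "$demo_dir/config"
printf 'openshift:\n- infrastructure:\n    use_sts: True\n' > "$demo_dir/config/ocp.yaml"

if config_uses_sts "$demo_dir"; then
    echo "use_sts is enabled"
else
    echo "use_sts is not enabled; temporary credentials may not work" >&2
fi
rm -rf "$demo_dir"
```

A similar test could be combined with a check on AWS_SESSION_TOKEN so that the warning is only printed when temporary credentials are actually in use.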

Obtain your ROSA login token

To run rosa commands to manage the cluster, the deployer requires the ROSA login token.

- Go to https://cloud.redhat.com/openshift/token/rosa

- Log in with your Red Hat user ID and password. If you don't have one yet, you need to create it.

- Copy the offline access token presented on the screen and store it in a safe place.

If ROSA is already installed

This scenario is supported. To enable this feature, please ensure that you take the following steps:

- Include the environment ID in the infrastructure definition {{ env_id }} to match the existing cluster

- Create the "cluster-admin" password token using the following command:

$ cp-deploy.sh vault set -vs={{env_id}}-cluster-admin-password=[YOUR PASSWORD]

Without these changes, the deployer will fail and you will receive the following error message: "Failed to get the cluster-admin password from the vault".
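The secret name in the command above is the environment ID followed by a fixed suffix. As a small sketch of that naming rule (cluster_admin_secret_name is a hypothetical helper, and pluto-01 is just a sample environment ID):

```shell
# Sketch: build the vault secret name for an existing cluster's
# cluster-admin password from the environment ID.
# cluster_admin_secret_name is a hypothetical helper.
cluster_admin_secret_name() {
    printf '%s-cluster-admin-password\n' "$1"
}

cluster_admin_secret_name pluto-01
# prints: pluto-01-cluster-admin-password
```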

3. Acquire entitlement keys and secrets

If you want to pull the Cloud Pak images from the entitled registry (i.e. an online install), or if you want to mirror the images to your private registry, you need to download the entitlement key. You can skip this step if you're installing from a private registry and all Cloud Pak images have already been downloaded to the private registry.

- Navigate to https://myibm.ibm.com/products-services/containerlibrary and log in with your IBMid credentials

- Select Get Entitlement Key and create a new key (or copy your existing key)

- Copy the key value

Warning

You can choose to download the entitlement key to a file. However, when we reference the entitlement key, we mean the 80+ character string that is displayed, not the file.
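The distinction between the key string and the downloaded file can be turned into a quick sanity check. This is only a sketch under the assumptions stated in the warning: looks_like_entitlement_key is a hypothetical helper that rejects a value naming an existing file and otherwise only checks the 80+ character length; it does not validate the key itself.

```shell
# Sketch: rough plausibility check for an entitlement key value.
# looks_like_entitlement_key is a hypothetical helper.
looks_like_entitlement_key() {
    key=$1
    # A path to an existing file means the file, not the key string, was supplied.
    if [ -f "$key" ]; then
        return 1
    fi
    # The key is described as an 80+ character string.
    [ "${#key}" -ge 80 ]
}

if looks_like_entitlement_key "${CP_ENTITLEMENT_KEY:-}"; then
    echo "CP_ENTITLEMENT_KEY has a plausible length"
else
    echo "CP_ENTITLEMENT_KEY is unset, too short, or points to a file" >&2
fi
```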

4. Set environment variables and secrets

export AWS_ACCESS_KEY_ID=your_access_key

export AWS_SECRET_ACCESS_KEY=your_secret_access_key

export ROSA_LOGIN_TOKEN="your_rosa_login_token"

export CP_ENTITLEMENT_KEY=your_cp_entitlement_key

Optional: If your user does not have permanent administrator access and uses temporary credentials instead, you can set AWS_SESSION_TOKEN to be used by the AWS CLI.

export AWS_SESSION_TOKEN=your_session_token

- AWS_ACCESS_KEY_ID: This is the AWS Access Key you retrieved above; often this is something like AK1A2VLMPQWBJJQGD6GV

- AWS_SECRET_ACCESS_KEY: The secret associated with your AWS Access Key, also retrieved above

- AWS_SESSION_TOKEN: The session token that will grant temporary elevated permissions

- ROSA_LOGIN_TOKEN: The offline access token that was retrieved before. This is a very long string (200+ characters). Make sure you enclose the string in single or double quotes as it may hold special characters

- CP_ENTITLEMENT_KEY: This is the entitlement key you acquired as per the instructions above; this is an 80+ character string

Warning

If your AWS_SESSION_TOKEN expires while the deployer is still running, the deployer may end abnormally. In that case, you can issue new temporary credentials (AWS_ACCESS_KEY_ID, AWS_SECRET_ACCESS_KEY and AWS_SESSION_TOKEN) and restart the deployer. Alternatively, you can update the 3 vault secrets (aws-access-key, aws-secret-access-key and aws-session-token) with new values, as the deployer re-retrieves them on a regular basis.
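Since the deployer depends on several environment variables, a short pre-flight check can catch an unset one before a long run starts. The sketch below is an illustration, not part of the deployer: missing_vars is a hypothetical helper that prints the names of variables that are unset or empty. AWS_SESSION_TOKEN is left out of the demonstration because it is optional.

```shell
# Sketch: print the names of variables that are unset or empty.
# missing_vars is a hypothetical helper; pass only trusted variable names,
# because the lookup uses eval.
missing_vars() {
    for name in "$@"; do
        # Indirect lookup of the variable named by $name.
        eval "value=\${$name:-}"
        if [ -z "$value" ]; then
            printf '%s\n' "$name"
        fi
    done
}

missing=$(missing_vars AWS_ACCESS_KEY_ID AWS_SECRET_ACCESS_KEY ROSA_LOGIN_TOKEN CP_ENTITLEMENT_KEY)
if [ -n "$missing" ]; then
    echo "not set:" $missing
else
    echo "all required variables are set"
fi
```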

Optional: Set the GitHub Personal Access Token (PAT)

In some cases, download of the cloudctl and cpd-cli clients from @IBM will fail because GitHub limits the number of API calls from non-authenticated clients. You can remediate this issue by creating a Personal Access Token on github.com and creating a secret in the vault.

cp-deploy.sh vault set -vs github-ibm-pat=<your PAT>

Alternatively, you can set the secret by adding -vs github-ibm-pat=<your PAT> to the cp-deploy.sh env apply command.

5. Run the deployer

Set path and alias for the deployer

source ./set-env.sh

Optional: validate the configuration

If you only want to validate the configuration, you can run the deployer with the --check-only argument. This will run the first stage to validate variables and vault secrets and then execute the generators.

cp-deploy.sh env apply --check-only --accept-all-licenses

Run the Cloud Pak Deployer

To run the container using a local configuration input directory and a data directory where temporary files and state are kept, use the example below. If you don't specify the status directory, the deployer will automatically create a temporary directory. Please note that the status directory will also hold secrets if you have configured a flat file vault. If you lose the directory, you will not be able to make changes to the configuration and adjust the deployment. It is best to specify a permanent directory that you can reuse later. If you specify an existing directory, the current user must be the owner of the directory; otherwise the container may fail with insufficient permissions.

cp-deploy.sh env apply --accept-all-licenses
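The ownership requirement for an existing status directory can be verified before starting the deployer. The following sketch uses a hypothetical helper, status_dir_usable, and assumes that a path that does not exist yet is acceptable. The fallback path $HOME/cpd-status mirrors the earlier example.

```shell
# Sketch: check that a status directory is safe to hand to the deployer.
# status_dir_usable is a hypothetical helper.
status_dir_usable() {
    dir=$1
    # Assumption: a path that does not exist yet is acceptable.
    [ ! -e "$dir" ] && return 0
    # An existing path must be a directory owned by the current user.
    [ -d "$dir" ] && [ -O "$dir" ]
}

if status_dir_usable "${STATUS_DIR:-$HOME/cpd-status}"; then
    echo "status directory looks usable"
else
    echo "status directory exists but is not a directory you own" >&2
fi
```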

You can also specify extra variables such as env_id to override the names of the objects referenced in the .yaml configuration files as {{ env_id }}-xxxx. For more information about the extra (dynamic) variables, see advanced configuration.

The --accept-all-licenses flag is optional and confirms that you accept all licenses of the installed cartridges and instances. Licenses must be either accepted in the configuration files or at the command line.

When running the command, the container will start as a daemon and the command will tail-follow the logs. You can press Ctrl-C at any time to interrupt the logging but the container will continue to run in the background.

You can return to view the logs as follows:

cp-deploy.sh env logs

Deploying the infrastructure, preparing OpenShift and installing the Cloud Pak will take a long time, typically between 1 and 5 hours, depending on which Cloud Pak cartridges you configured. For estimated duration of the steps, refer to Timings.

If you need to interrupt the automation, use CTRL-C to stop the logging output and then use:

cp-deploy.sh env kill

On failure

If the Cloud Pak Deployer fails, for example because certain infrastructure components are temporarily not available, fix the cause if needed and then just re-run it with the same CONFIG_DIR and STATUS_DIR as well as any extra variables. The provisioning process has been designed to be idempotent and it will not redo actions that have already completed successfully.
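Because re-running is safe, transient failures can in principle be handled by a simple retry wrapper. The sketch below is a generic illustration and not something the deployer provides: retry is a hypothetical helper that runs any command up to a given number of times and stops at the first success. It does not wait between attempts; a sleep could be added if the failure needs time to clear.

```shell
# Sketch: run a command up to <attempts> times, stopping at the first success.
# retry is a hypothetical helper, not part of cp-deploy.sh.
retry() {
    attempts=$1
    shift
    n=1
    while [ "$n" -le "$attempts" ]; do
        if "$@"; then
            return 0
        fi
        echo "attempt $n of $attempts failed" >&2
        n=$((n + 1))
    done
    return 1
}

# Demonstration with a command that always succeeds.
retry 3 true && echo "succeeded"
```

With the deployer, the wrapped command would be whatever you ran originally, for example retry 3 followed by the same env apply invocation, keeping CONFIG_DIR and STATUS_DIR unchanged. Whether unattended retries are appropriate depends on the cause of the failure.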

Finishing up

Once the process has finished, it will output the URLs by which you can access the deployed Cloud Pak. You can also find this information under the cloud-paks directory in the status directory you specified.

To retrieve the Cloud Pak URL(s):

cat $STATUS_DIR/cloud-paks/*

This will show the Cloud Pak URLs:

Cloud Pak for Data URL for cluster pluto-01 and project cpd:

https://cpd-cpd.apps.pluto-01.pmxz.p1.openshiftapps.com
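If you only need the URLs themselves, for example to pass them to another script, they can be filtered out of the files shown above. This is a sketch under the assumption that the files contain plain text with the URL on its own line, as in the sample output; list_cloud_pak_urls is a hypothetical helper and the file name used in the demonstration is made up.

```shell
# Sketch: print only the https URLs found in <status_dir>/cloud-paks/*.
# list_cloud_pak_urls is a hypothetical helper.
list_cloud_pak_urls() {
    # -h suppresses file names, -o prints only the matching part.
    grep -Eho 'https://[^[:space:]]+' "$1"/cloud-paks/* 2>/dev/null
}

# Demonstration with a throwaway status directory and a made-up file name.
demo_dir=$(mktemp -d)
mkdir -p "$demo_dir/cloud-paks"
printf 'Cloud Pak for Data URL for cluster pluto-01 and project cpd:\nhttps://cpd-cpd.apps.pluto-01.pmxz.p1.openshiftapps.com\n' > "$demo_dir/cloud-paks/sample.txt"
list_cloud_pak_urls "$demo_dir"
rm -rf "$demo_dir"
```

Against a real run you would call list_cloud_pak_urls "$STATUS_DIR".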

The admin password can be retrieved from the vault as follows:

List the secrets in the vault:

cp-deploy.sh vault list

This will show something similar to the following:

Secret list for group sample:

- aws-access-key

- aws-secret-access-key

- ibm_cp_entitlement_key

- rosa-login-token

- pluto-01-cluster-admin-password

- cp4d_admin_zen_40_pluto_01

- all-config

You can then retrieve the Cloud Pak for Data admin password like this:

cp-deploy.sh vault get --vault-secret cp4d_admin_zen_40_pluto_01

PLAY [Secrets] *****************************************************************

included: /cloud-pak-deployer/automation-roles/99-generic/vault/vault-get-secret/tasks/get-secret-file.yml for localhost

cp4d_admin_zen_40_pluto_01: gelGKrcgaLatBsnAdMEbmLwGr
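The secret value is the text after the secret name and colon on the last line of the output above. As a sketch, assuming the output keeps that "name: value" shape, the value can be isolated with sed; extract_secret_value is a hypothetical helper, and the secret name is used as a regular expression, so names containing regex metacharacters would need escaping.

```shell
# Sketch: read text on stdin and print the value from the first
# "<name>: <value>" line. extract_secret_value is a hypothetical helper.
extract_secret_value() {
    sed -n "s/^$1: //p" | head -n 1
}

# Demonstration with text shaped like the sample output (placeholder value).
printf 'PLAY [Secrets] ****\n\ncp4d_admin_zen_40_pluto_01: placeholder-value\n' |
    extract_secret_value cp4d_admin_zen_40_pluto_01
```

In practice the input would be the output of the vault get command, piped into the helper.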

Post-install configuration

You can find examples of typical changes you may want to make here: Post-run changes.